A gesture-controlled instrument for collaborative music creation. The instrument is an application that runs in a web browser and tracks the user's hand motion with a webcam.

Background

The global pandemic has forced us to distance ourselves physically. The goal of this project was to counteract the negative effects of physical distancing. Since music is a universal language, it creates common ground for collaboration between people with various cultural backgrounds. Moreover, the use of body movements can enhance social engagement and help develop communication between people. The idea behind this project was to create an easily accessible, interactive, multi-user application in which people can play music together using hand movements, much as they would with physical instruments. The project's main goal is to connect people locally and globally via a collaborative interactive musical instrument.

Tools

I completed this project together with my friends from Aalborg University. We built it in Unity, with WebGL as our target format. We wrote the computer vision and audio engine parts in JavaScript. For multiplayer we decided to use the Photon Unity Networking package, and we used a particle system to create the visuals. We divided the work between team members: Chrysoula Kaloumenou was responsible for the visuals, Sofia Lamda handled the computer vision, and Panagiota Pouliou took on the multiplayer implementation. My main responsibility was creating an audio engine that could run in a web browser. The instrument had to be controllable by multiple users in real time.

The idea behind the collaborative instrument

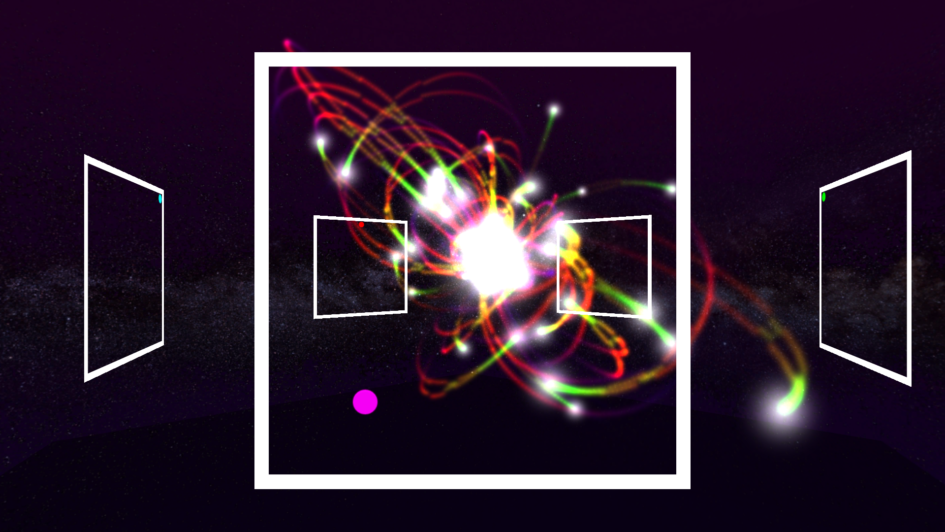

Our idea for the collaboration was that each user would have access to two separate parameters of the instrument. For the control interface, we decided that each user would see a two-dimensional plane in front of them. This decision came from our idea of using computer vision to control the parameters. The position of the user's hand in front of the webcam is mirrored by the position of a dot on the plane, and each coordinate of the dot represents a different parameter. We also wanted the instrument to feature visuals that react to the audio. I made a simple prototype of the interface and visuals in Pure Data.
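
The mapping from the dot's position to two parameters can be illustrated with a small sketch. This is not the code from the project; it assumes the hand position arrives as normalised coordinates between 0 and 1, and the names `ParamRange` and `mapHandToParams` as well as the example ranges are hypothetical.

```typescript
// Hypothetical range of one instrument parameter (illustrative only).
interface ParamRange {
  min: number;
  max: number;
}

// Keep a coordinate inside the 0..1 plane, in case tracking overshoots.
function clamp01(value: number): number {
  return Math.min(1, Math.max(0, value));
}

// Map a normalised hand position (x, y in 0..1) to two parameter values,
// one per coordinate, using linear interpolation within each range.
function mapHandToParams(
  x: number,
  y: number,
  xRange: ParamRange,
  yRange: ParamRange
): { paramX: number; paramY: number } {
  const nx = clamp01(x);
  const ny = clamp01(y);
  return {
    paramX: xRange.min + nx * (xRange.max - xRange.min),
    paramY: yRange.min + ny * (yRange.max - yRange.min),
  };
}
```

For example, with an assumed range of 0 to 1000 on the horizontal axis, a hand in the middle of the frame would give `paramX` a value of 500. A real control would probably need a non-linear curve for parameters such as frequency, which this sketch leaves out.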

Audio engine of the instrument

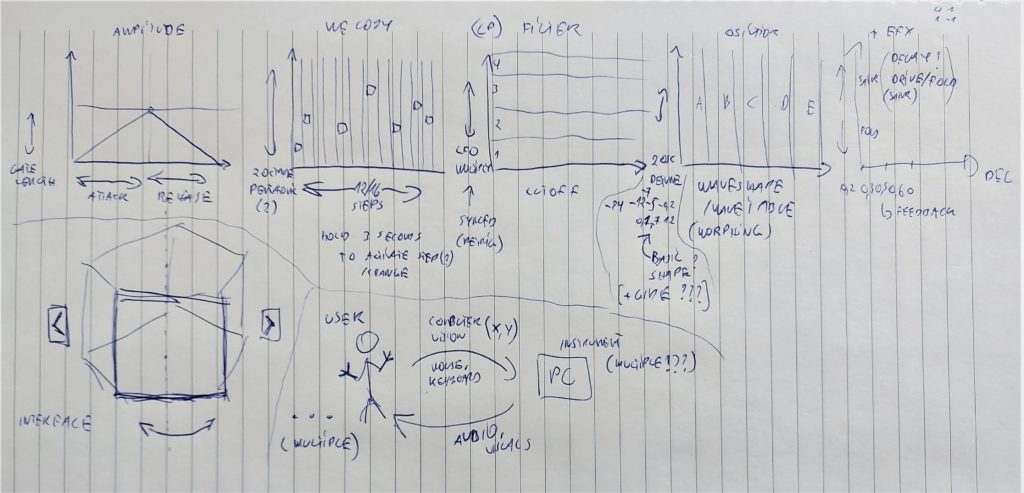

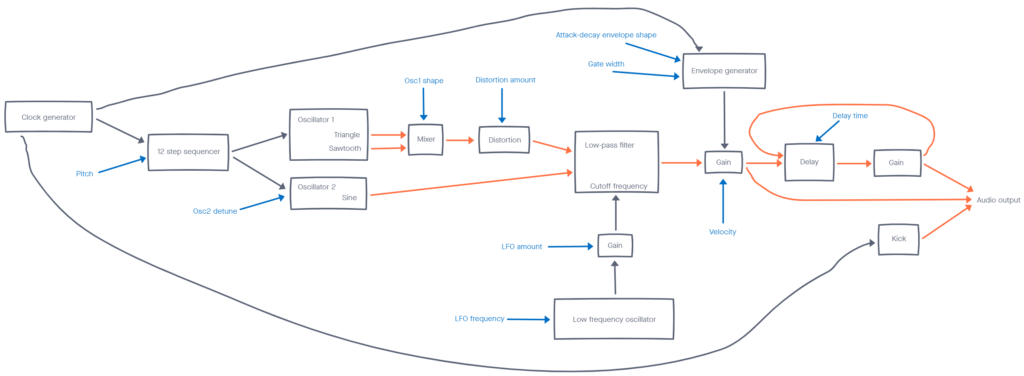

I began by sketching the building blocks of the instrument on paper.

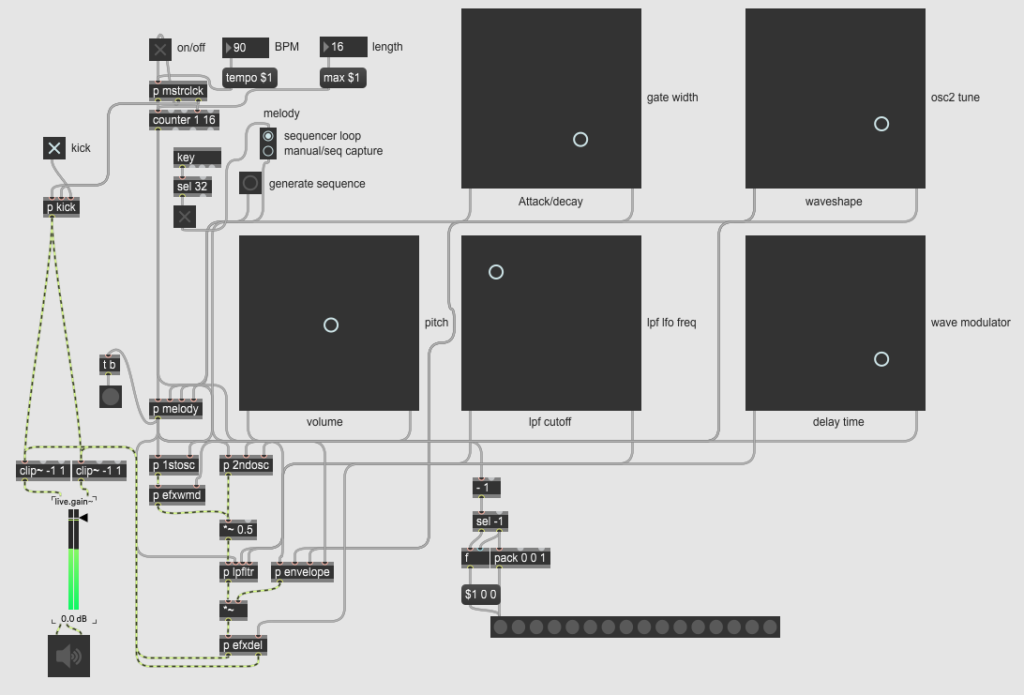

The sketch above might seem chaotic, but it shows how each user can control separate aspects of the instrument. I decided to create a simple subtractive synthesizer featuring two oscillators and a delay effect, with the melody controlled by a sequencer. Once I had an overview of what I wanted to achieve, I decided to build a prototype. I chose Max MSP because it is the fastest and most convenient tool for me to work with audio.

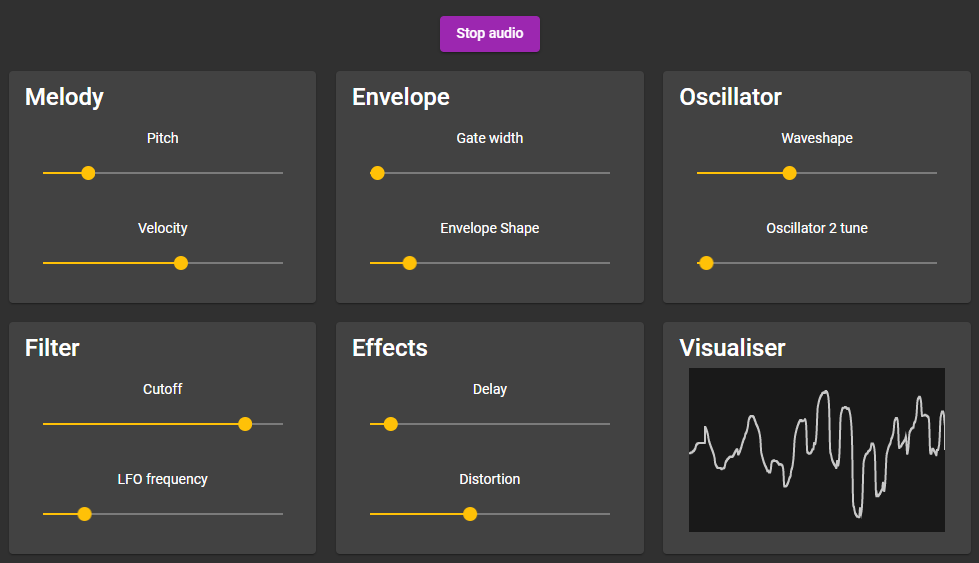
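
To give an idea of what a sequencer-driven melody involves, here is a minimal sketch of turning a note pattern into timed frequencies. It is not the project's sequencer: the function names, the use of MIDI note numbers, and the steps-per-beat convention are all assumptions made for illustration.

```typescript
// One scheduled note: when to play it (seconds) and at which pitch (Hz).
interface NoteEvent {
  time: number;
  frequency: number;
}

// Convert a MIDI note number to Hz (equal temperament, A4 = note 69 = 440 Hz).
function midiToFrequency(note: number): number {
  return 440 * Math.pow(2, (note - 69) / 12);
}

// Length of one sequencer step in seconds for a given tempo.
function stepDuration(bpm: number, stepsPerBeat: number): number {
  return 60 / bpm / stepsPerBeat;
}

// Build the note events for one pass through the pattern.
// A null entry is a rest and produces no event.
function schedulePattern(
  pattern: Array<number | null>,
  bpm: number,
  stepsPerBeat: number,
  startTime: number
): NoteEvent[] {
  const step = stepDuration(bpm, stepsPerBeat);
  const events: NoteEvent[] = [];
  pattern.forEach((note, index) => {
    if (note !== null) {
      events.push({
        time: startTime + index * step,
        frequency: midiToFrequency(note),
      });
    }
  });
  return events;
}
```

In a browser, each event could then be applied to an oscillator with the Web Audio API call `frequency.setValueAtTime(frequency, time)`, although how the actual engine schedules its notes may well differ.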

Once I was satisfied with the selection of controls, their ranges, and their distribution between users, I started moving my idea into the web browser. I decided to use the Web Audio API for this purpose. Since I had no prior experience with this API, I had to learn it by trial and error. I created a simple front-end interface that exposes the controls of the audio engine.

The application code is available on my GitHub and the live demo is available here. I created this application in Angular using TypeScript. For the final version of the project I translated the audio engine into JavaScript.

The result

The final version of the complete Unity project is available on my GitHub. The live version of the gesture-controlled collaborative instrument is available here.
